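Baoyue reading guide summary

1. Keywords: Cloudflare, Load Balancing, Private Traffic, Hardware Elimination, Enhancement

2. Summary: Cloudflare continues to release new load balancing solutions that support multiple network layers and traffic types, eliminate hardware costs, and integrate more deeply with its platform. The latest release adds support for end-to-end private traffic and device traffic, solving customer connectivity problems and improving flexibility and security.

3. Main points:

- Cloudflare launches new load balancing solutions
  - In 2023, support for Local Traffic Management (LTM)
  - This year, support for layer 4 load balancing to private networks via Spectrum
  - Most recently, support for end-to-end private traffic and WARP authenticated device traffic, with no dedicated hardware load balancer required
- Existing load balancing support and challenges
  - Supports multiple load balancing traffic flows
  - Advantages include no hardware cost or maintenance, global deployment, and virtually unlimited scale and capacity
- Keeping private resources private
  - Previously, layer 4 load balancers were connected to the public Internet; they can now be isolated behind private IPs
  - Can be combined with Magic WAN to keep traffic private and optimized
- Supporting distributed users
  - Cloudflare WARP can be used as an on-ramp to reach load balancers configured with private IP addresses
- Connecting load balancing to Cloudflare One
  - Integrates existing load balancing services with private networking services, assigns a private IP address to each private load balancer, and handles traffic flexibly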


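Article URL: https://blog.cloudflare.com/eliminating-hardware-with-load-balancing-and-cloudflare-one

Source: blog.cloudflare.com

Author: Noah Crouch

Published: 2024/7/16 14:02

Language: English

Word count: 1564

Estimated reading time: 7 minutes

Score: 90

Tags: load balancing, Cloudflare One, private networking, network optimization, network security

The original article follows.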


In 2023, Cloudflare introduced a new load balancing solution supporting Local Traffic Management (LTM). This year, we took it a step further by introducing support for layer 4 load balancing to private networks via Spectrum. Now, organizations can seamlessly balance public HTTP(S), TCP, and UDP traffic to their privately hosted applications. Today, we’re thrilled to unveil our latest enhancement: support for end-to-end private traffic flows as well as WARP authenticated device traffic, eliminating the need for dedicated hardware load balancers! These groundbreaking features are powered by the enhanced integration of Cloudflare load balancing with our Cloudflare One platform, and are available to our enterprise customers. With this upgrade, our customers can now utilize Cloudflare load balancers for both public and private traffic directed at private networks.

Cloudflare Load Balancing today

Before discussing the new features, let’s review Cloudflare’s existing load balancing support and the challenges customers face.

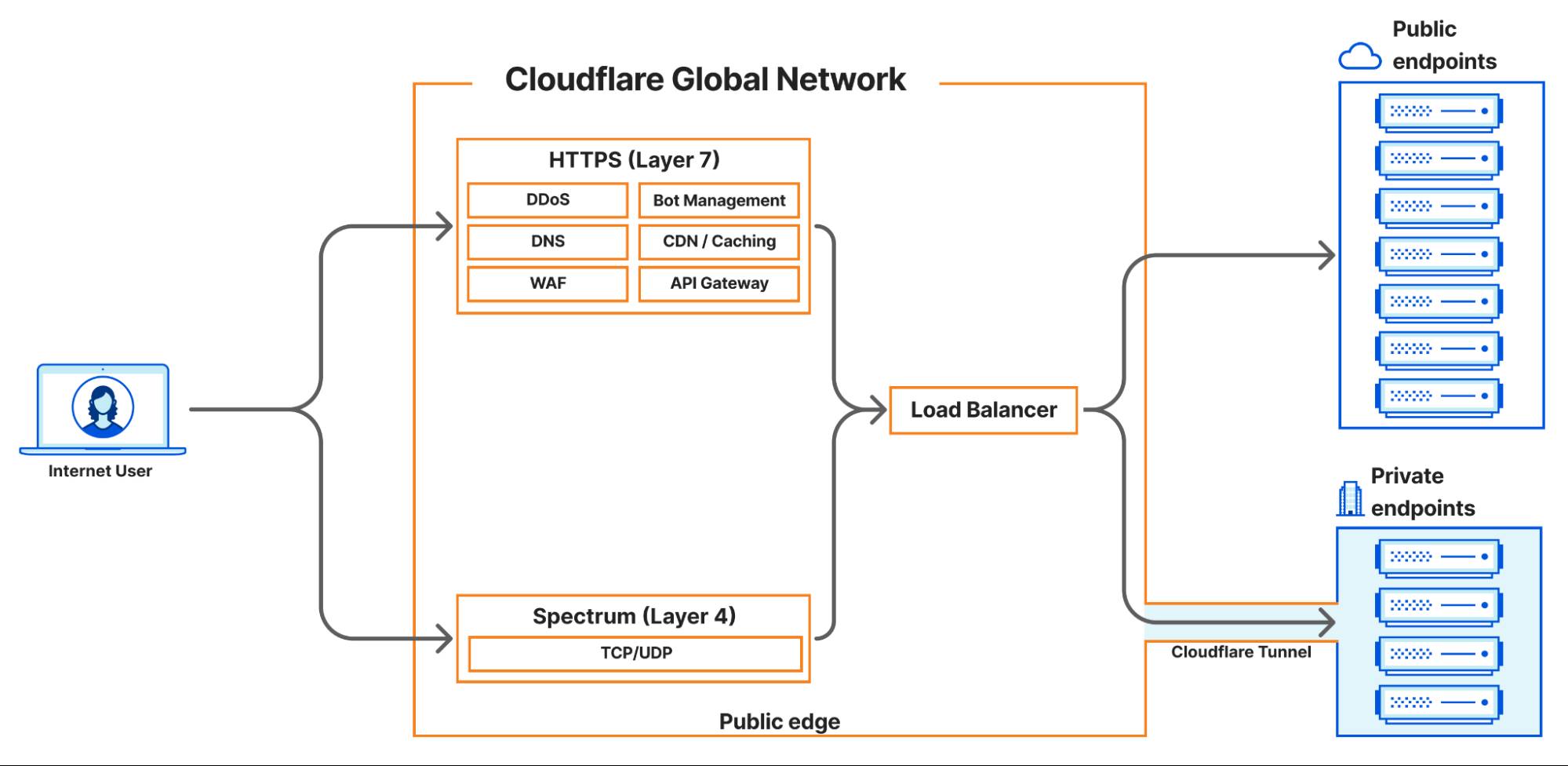

Cloudflare currently supports four main load balancing traffic flows:

- Internet-facing load balancers connecting to publicly accessible endpoints at layer 7, supporting HTTP(S).

- Internet-facing load balancers connecting to publicly accessible endpoints at layer 4 (Spectrum), supporting TCP and UDP services.

- Internet-facing load balancers connecting to private endpoints at layer 7 HTTP(S) via Cloudflare Tunnels.

- Internet-facing load balancers connecting to private endpoints at layer 4 (Spectrum), supporting TCP and UDP services via Cloudflare Tunnels.

One of the biggest advantages of Cloudflare’s load balancing solutions is the elimination of hardware costs and maintenance. Unlike hardware-based load balancers, which are costly to purchase, license, operate, and upgrade, Cloudflare’s solution requires no hardware. There’s no need to buy additional modules or new licenses, and you won’t face end-of-life issues with equipment that necessitate costly replacements.

With Cloudflare, you can focus on innovation and growth. Load balancers are deployed in every Cloudflare data center across the globe, in over 300 cities, providing virtually unlimited scale and capacity. You never need to worry about bandwidth constraints, deployment locations, extra hardware modules, downtime, upgrades, or supply chain constraints. Cloudflare’s global Anycast network ensures that every customer connects to a nearby data center and load balancer, where policies, rules, and steering are applied efficiently. And now, the resilience, scale, and simplicity of Cloudflare load balancers can be integrated into your private networks! We have worked hard to ensure that Cloudflare load balancers are highly available and disaster ready, from the core to the edge – even when datacenters lose power.

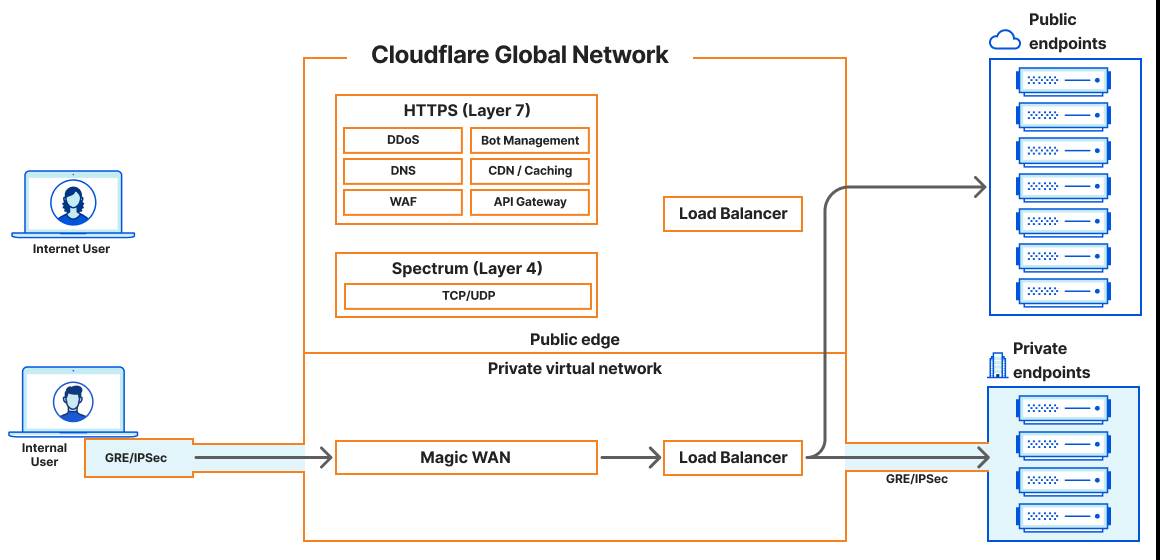

Keeping private resources private with Magic WAN

Before today’s announcement, all of Cloudflare’s load balancers operating at layer 4 have been connected to the public Internet. Customers have been able to secure the traffic flowing to their load balancers with WAF rules and Zero Trust policies, but some customers would prefer to keep certain resources private and under no circumstances exposed to the Internet. It’s been possible to isolate origin servers and endpoints this way, which can exist on private networks that are only accessible via Cloudflare Tunnels. And as of today, we can offer a similar level of isolation to customers’ layer 4 load balancers.

In our previous LTM blog post, we discussed connecting these internal or private resources to the Cloudflare global network and how Cloudflare would soon introduce load balancers that are accessible via private IP addresses. Unlike other Cloudflare load balancers, these do not have an associated hostname. Rather, they are accessible via an RFC 1918 private IP address. In the land of load balancers, this is often referred to as a virtual IP (VIP). As of today, load balancers that are accessible at private IPs can now be used within a virtual network to isolate traffic to a certain set of Cloudflare tunnels, enabling customers to load balance traffic within their private network without exposing applications to the public Internet.
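As a small illustration of what "RFC 1918 private IP address" means for a VIP, the sketch below checks whether a candidate address falls inside one of the three private IPv4 blocks the RFC reserves. The function name `is_rfc1918` is a hypothetical helper for this example, not part of any Cloudflare API.

```python
import ipaddress

# The three IPv4 blocks reserved for private use by RFC 1918.
RFC1918_NETWORKS = [
    ipaddress.ip_network("10.0.0.0/8"),
    ipaddress.ip_network("172.16.0.0/12"),
    ipaddress.ip_network("192.168.0.0/16"),
]


def is_rfc1918(address: str) -> bool:
    """Return True if the address lies inside one of the RFC 1918 blocks.

    Hypothetical helper for illustration only.
    """
    ip = ipaddress.ip_address(address)
    return any(ip in network for network in RFC1918_NETWORKS)


if __name__ == "__main__":
    for candidate in ("10.0.0.5", "172.32.0.1", "192.168.1.10"):
        print(candidate, is_rfc1918(candidate))
```

An address such as `10.0.0.5` would qualify as a private VIP under this check, while `172.32.0.1` sits just outside the `172.16.0.0/12` block and would not.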

The question you might be asking is, “If I have a private IP load balancer and privately hosted applications, how do I or my users actually reach these now-private services?”

Cloudflare Magic WAN can now be used as an on-ramp in tandem with Cloudflare load balancers that are accessible via an assigned private IP address. Magic WAN provides a secure and high-performance connection to internal resources, ensuring that traffic remains private and optimized across our global network. With Magic WAN, customers can connect their corporate networks directly to Cloudflare’s global network with GRE or IPSec tunnels, maintaining privacy and security while enjoying seamless connectivity. The Magic WAN Connector easily establishes connectivity to Cloudflare without the need to configure network gear, and it can be deployed at any physical or cloud location! With the enhancements to Cloudflare’s load balancing solution, customers can confidently keep their corporate applications resilient while maintaining the end-to-end privacy and security of their resources.

This enhancement opens up numerous use cases for internal load balancing, such as managing traffic between different data centers, efficiently routing traffic for internally hosted applications, optimizing resource allocation for critical applications, and ensuring high availability for internal services. Organizations can now replace traditional hardware-based load balancers, reducing complexity and lowering costs associated with maintaining physical infrastructure. By leveraging Cloudflare load balancing and Magic WAN, companies can achieve greater flexibility and scalability, adapting quickly to changing network demands without the need for additional hardware investments.

But what about latency? Load balancing is all about keeping your applications resilient and performant, and Cloudflare was built with speed at its core. There is a Cloudflare data center within 50 ms of 95% of the Internet-connected population globally! Now, we support all Cloudflare One on-ramps to not only provide seamless and secure connectivity, but also to dramatically reduce latency compared to legacy solutions. Load balancing also works seamlessly with Argo Smart Routing, which intelligently routes around network congestion to improve your application performance by up to 30%! Earlier posts on the Cloudflare blog cover in more detail how Cloudflare One can reduce application latency.

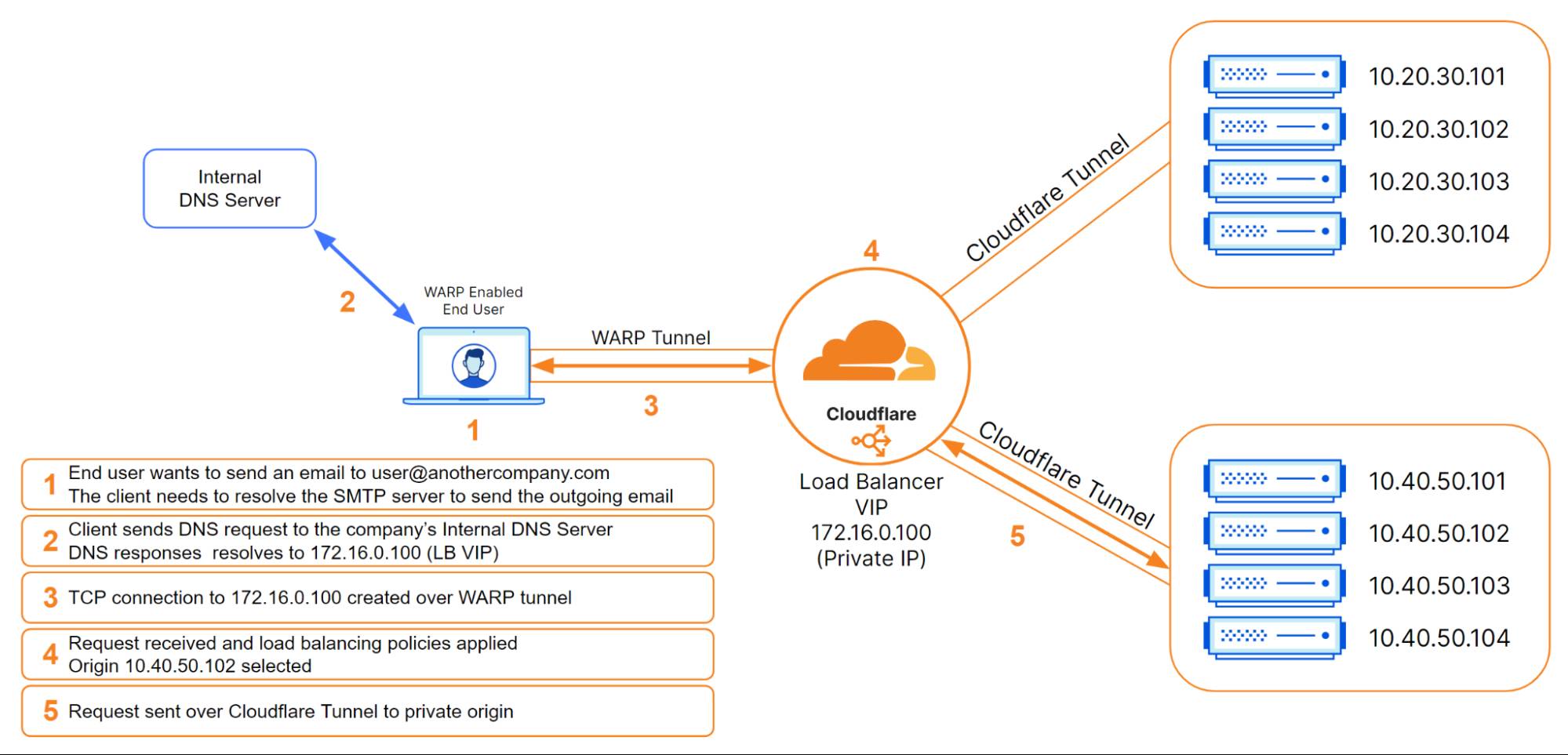

Supporting distributed users with Cloudflare WARP

But what about when users are distributed and not connected to the local corporate network? Cloudflare WARP can now be used as an on-ramp to reach Cloudflare load balancers that are configured with private IP addresses. The Cloudflare WARP client allows you to protect corporate devices by securely and privately sending traffic from those devices to Cloudflare’s global network, where Cloudflare Gateway can apply advanced web filtering. The WARP client also makes it possible to apply advanced Zero Trust policies that check a device’s health before it connects to corporate applications.

In this load balancing use case, WARP pairs up perfectly with Cloudflare Tunnels so that customers can place their private origins within virtual networks to help either isolate traffic or handle overlapping private IP addresses. Once these virtual networks are defined, administrators can configure WARP profiles to allow their users to connect to the proper virtual networks. Once connected, WARP takes the configuration of the virtual networks and installs routes on the end users’ devices. These routes will tell the end user’s device how to reach the Cloudflare load balancer that was created with a private, non-publicly routable IP address. The administrator could then create a DNS record locally that would point to that private IP address. Once DNS resolves locally, the device would route all subsequent traffic over the WARP connection. This is all seamless to the user and occurs with minimal latency.
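The device-side behavior described above (a local DNS record resolves to the private IP, and an installed route then sends that traffic over WARP) can be sketched roughly as follows. This is a simplified model, not the WARP client's actual logic: the route list, the hostname `app.internal.example`, and the function names are all assumptions made for illustration, and the longest-prefix match stands in for whatever the operating system's routing table really does.

```python
import ipaddress
from typing import Optional

# Hypothetical routes a WARP profile might install for one virtual network.
WARP_ROUTES = [
    ipaddress.ip_network("10.20.0.0/16"),
    ipaddress.ip_network("10.20.30.0/24"),
]

# Hypothetical local DNS record pointing at the load balancer's private IP.
LOCAL_DNS = {"app.internal.example": "10.20.30.5"}


def matching_route(destination: str) -> Optional[ipaddress.IPv4Network]:
    """Return the most specific installed route covering the destination."""
    ip = ipaddress.ip_address(destination)
    candidates = [network for network in WARP_ROUTES if ip in network]
    # Longest-prefix match: the route with the largest prefix length wins.
    return max(candidates, key=lambda network: network.prefixlen, default=None)


def next_hop(hostname: str) -> str:
    """Decide whether traffic for a hostname goes over WARP or the default path."""
    address = LOCAL_DNS.get(hostname)
    if address is None:
        return "default"
    return "warp" if matching_route(address) is not None else "default"


if __name__ == "__main__":
    print(next_hop("app.internal.example"))
    print(next_hop("unknown.example"))
```

In this model the user never sees the private IP: resolving the name and matching the route both happen on the device before the first packet is sent.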

How we connected load balancing to Cloudflare One

In contrast to public L4 or L7 load balancers, private L4 load balancers are not going to have publicly addressable hostnames or IP addresses, but we still need to be able to handle their traffic. To make this possible, we had to integrate existing load balancing services with private networking services created by our Cloudflare One team. To do this, upon creation of a private load balancer, we now assign a private IP address within the customer’s virtual network. When traffic destined for a private load balancer enters Cloudflare, our private networking services make a request to load balancing to determine which endpoint to connect to. The information in the response from load balancing is used to connect directly to a privately hosted endpoint via a variety of secure traffic off-ramps. This differs significantly from our public load balancers where traffic is off-ramped to the public internet. In fact, we can now direct traffic from any on-ramp to any off-ramp! This allows for significant flexibility in architecture. For example, not only can we direct WARP traffic to an endpoint connected via GRE or IPSec, but we can also off-ramp this traffic to Cloudflare Tunnel, a CNI connection, or out to the public internet! Now, instead of purchasing a bespoke load balancing solution for each traffic type, like an application or network load balancer, you can configure a single load balancing solution to handle virtually any permutation of traffic that your business needs to run!
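To make the "any on-ramp to any off-ramp" flow more concrete, here is a minimal sketch of the request path: traffic arrives for a private VIP, the load balancer is asked for an endpoint, and the answer determines the off-ramp. Everything here is hypothetical (the class names, the `OffRamp` values, and especially the round-robin selection, which is a stand-in and not one of Cloudflare's steering methods); it only illustrates how endpoint choice and off-ramp choice can be decoupled from the on-ramp.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Iterable


class OffRamp(Enum):
    """Hypothetical labels for the off-ramps mentioned in the article."""
    TUNNEL = "cloudflare-tunnel"
    GRE = "gre"
    IPSEC = "ipsec"
    CNI = "cni"
    PUBLIC = "public-internet"


@dataclass(frozen=True)
class Endpoint:
    address: str
    off_ramp: OffRamp
    healthy: bool = True


class PrivateLoadBalancer:
    """Toy load balancer reachable at a private VIP (illustrative only)."""

    def __init__(self, vip: str, endpoints: Iterable[Endpoint]) -> None:
        self.vip = vip
        self.endpoints = list(endpoints)
        self._next = 0  # round-robin cursor; an assumed steering method

    def select(self) -> Endpoint:
        """Pick the next healthy endpoint in round-robin order."""
        healthy = [e for e in self.endpoints if e.healthy]
        if not healthy:
            raise RuntimeError("no healthy endpoints")
        choice = healthy[self._next % len(healthy)]
        self._next += 1
        return choice


def route(lb: PrivateLoadBalancer, on_ramp: str) -> str:
    """Describe the path for one connection: on-ramp, VIP, off-ramp, endpoint."""
    endpoint = lb.select()
    return f"{on_ramp} -> {lb.vip} -> {endpoint.off_ramp.value} -> {endpoint.address}"


if __name__ == "__main__":
    lb = PrivateLoadBalancer(
        "10.20.30.5",
        [
            Endpoint("10.0.1.10", OffRamp.TUNNEL),
            Endpoint("10.0.2.10", OffRamp.IPSEC, healthy=False),
            Endpoint("10.0.3.10", OffRamp.GRE),
        ],
    )
    print(route(lb, "warp"))
    print(route(lb, "magic-wan"))
```

The point of the design is that `route` takes the on-ramp as plain input and never branches on it: the same load balancer serves WARP and Magic WAN traffic, and the off-ramp is a property of the selected endpoint.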

Getting started with internal load balancing

We are excited to be releasing these new load balancing features that solve critical connectivity issues for our customers and effectively eliminate the need for a hardware load balancer. Cloudflare load balancers now support end-to-end private traffic flows with Cloudflare One. To get started with configuring this feature, take a look at our load balancing documentation.

We are just getting started with our local traffic management load balancing support. There is so much more to come including user experience changes, enhanced layer 4 session affinity, new steering methods, refined control of egress ports, and more.