包阅 Reading Guide Summary

1. Keywords: Gemini 1.5 Pro, vulnerability detection, code security, generative AI, experimental exploration

2. Summary:

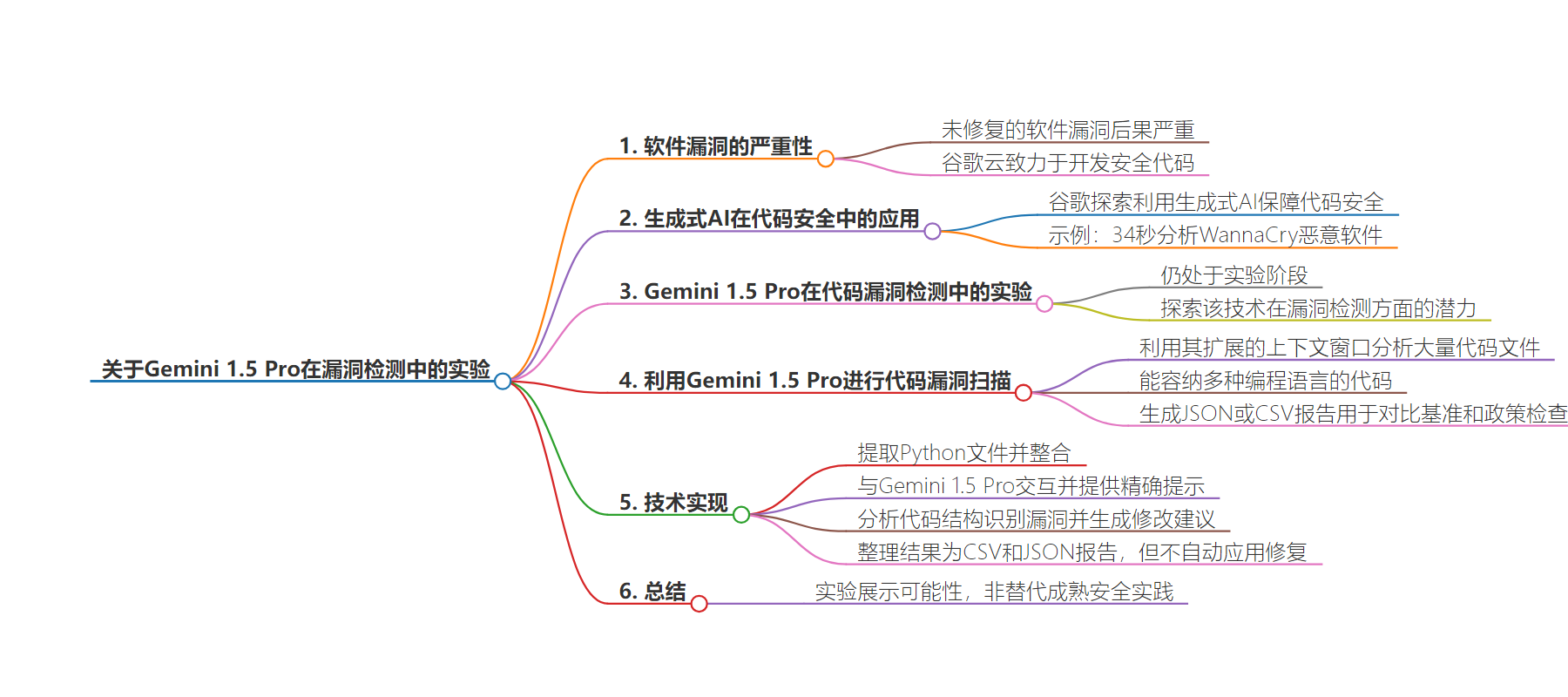

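This article explores an experiment that uses Google's Gemini 1.5 Pro to detect code vulnerabilities. It stresses how serious unpatched software vulnerabilities are, and notes that although the technology shows promise, it is still experimental and cannot replace mature security practices.

3. Main points:

– Unpatched software vulnerabilities have serious consequences, and Google places a high priority on developing secure code

– Explores how generative AI tools can help secure code, for example using Gemini 1.5 Pro to assist with code vulnerability detection and remediation

– Introduces an experiment in code vulnerability scanning with Gemini 1.5 Pro

– Uses its extended context window to analyze large numbers of code files

– Can handle code in several programming languages and generate JSON or CSV reports

– Describes the technical approach of the experiment

– Extracts Python files and consolidates them

– Interacts with the model, using prompt engineering to identify vulnerabilities and produce a specific output format

– Extracts the results and organizes them into reports; fixes are not applied automatically, and the scope of the experiment is limited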

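Source: cloud.google.com

Author: Souvik Mukherjee

Published: 2024/8/12 0:00

Language: English

Word count: 853

Estimated reading time: 4 minutes

Score: 84

Tags: AI security, Gemini 1.5 Pro, code vulnerability detection, Google Cloud, experimental technology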

The original article follows

Unpatched software vulnerabilities can have serious consequences. At Google Cloud, we want developers to reduce the risks they face by focusing on developing code that is secure by design and secure by default. While secure development can be time-consuming, generative AI can be used responsibly to help make that development process faster.

At Google, we’ve been exploring how gen AI tools can help secure code, which can pave the way for more secure and robust software applications. We demonstrated recently how we were able to reverse engineer and analyze the decompiled code of the WannaCry malware in a single pass — and identify the killswitch — in only 34 seconds. Using Google’s Gemini 1.5 Pro, a powerful multimodal AI model, we can help transform code vulnerability detection and remediation, and build a software vulnerability scanning and remediation engine.

While Gemini 1.5 Pro demonstrates promising capabilities in code analysis, it's important to note that this approach is still experimental. We believe it is worth exploring this technology's potential for vulnerability detection, and that further development and validation are needed before it can be considered a robust security tool.

Mature security solutions with established quality control and integration into CI/CD workflows are widely available and recommended for production environments. Today, we detail an experiment that aims to highlight the possibilities of generative AI in security. Please note that we do not advocate this solution as a replacement for established and proven security practices.

Exploring code vulnerability scanning with Gemini 1.5 Pro

Using Gemini 1.5 Pro to explore a potential approach to code vulnerability scanning, we can leverage its extended context window — up to 2 million tokens — to analyze large sets of code files stored in a Google Cloud Storage bucket. (In a modern CI/CD pipeline, this code would usually reside in a repository.)

The larger context window enhances the model’s ability to take in more information, resulting in outputs that are more consistent, relevant, and useful. It enables efficient scanning of large codebases, analysis of multiple files in a single call, and a deeper understanding of complex relationships and patterns within the code.

This experimental approach aims to efficiently scan large codebases, analyze multiple files in a single call, and delve deeper into complex code relationships and patterns. The model's deep analysis of code can help ensure comprehensive vulnerability detection, going beyond surface-level flaws.
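To make the context-window constraint concrete, here is a minimal sketch of how a set of files might be grouped so that each group fits in a single call. The four-characters-per-token ratio is only a rough assumption, not a real tokenizer, and the helper names `estimate_tokens` and `batch_files` are hypothetical rather than taken from the original notebook.

```python
from typing import Dict, List

CONTEXT_LIMIT_TOKENS = 2_000_000  # context window size cited in the article
CHARS_PER_TOKEN = 4               # assumption: rough heuristic, not a real tokenizer


def estimate_tokens(text: str) -> int:
    """Roughly estimate a token count from the character count."""
    return len(text) // CHARS_PER_TOKEN + 1


def batch_files(files: Dict[str, str], budget: int = CONTEXT_LIMIT_TOKENS) -> List[List[str]]:
    """Greedily group file paths so each group's estimated tokens fit the budget.

    A single file larger than the budget still gets a batch of its own;
    this sketch does not try to split individual files.
    """
    batches: List[List[str]] = []
    current: List[str] = []
    used = 0
    for path, source in files.items():
        cost = estimate_tokens(source)
        # Start a new batch when adding this file would exceed the budget.
        if current and used + cost > budget:
            batches.append(current)
            current, used = [], 0
        current.append(path)
        used += cost
    if current:
        batches.append(current)
    return batches
```

For an exact count, the Vertex AI SDK's `GenerativeModel.count_tokens` could replace the heuristic, at the cost of a network call per estimate.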

By using this approach, we can accommodate code written in several programming languages. Additionally, we can generate the findings and recommendations as JSON or CSV reports, which we would hypothetically use to make comparisons against established benchmarks and policy checks.
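As an illustration of the kind of policy check mentioned above, the sketch below reads a JSON report and returns the findings that exceed an allowed severity. The report schema (`file`, `severity`, `issue`) and the severity scale are assumptions made for this example; the article does not specify either.

```python
import json
from typing import Dict, List

# Hypothetical severity scale; the article does not define one.
SEVERITY_RANK = {"low": 1, "medium": 2, "high": 3, "critical": 4}


def violations(report_json: str, max_allowed: str = "medium") -> List[Dict[str, str]]:
    """Return findings whose severity is above the allowed level.

    Unknown severity labels are treated as the highest rank so that
    they are flagged for review instead of silently passing.
    """
    findings = json.loads(report_json)
    limit = SEVERITY_RANK[max_allowed]
    top = max(SEVERITY_RANK.values())
    flagged = []
    for finding in findings:
        label = str(finding.get("severity", "")).lower()
        if SEVERITY_RANK.get(label, top) > limit:
            flagged.append(finding)
    return flagged
```

A pipeline step could fail the build when `violations(...)` is non-empty, though in line with the article's caveats this would complement established scanners, not replace them.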

A deeper dive: The technical approach

All of the above is important to understand what you’re building. Now, it’s time to build it. We’ve developed a streamlined process to help you get started.

First, Python files are extracted from a specified Google Cloud Storage (GCS) bucket and consolidated into a single string to facilitate analysis. The engine then interacts with Gemini 1.5 Pro using the Vertex AI Python SDK for generative models, and provides a precise prompt to identify vulnerabilities and generate specific output formats.
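The extraction and model-call steps might look roughly like the sketch below. It is not the notebook's code: the function names, the file-marker format, the prompt wording, the finding keys, the default region, and the `gemini-1.5-pro` model ID are all assumptions for illustration. The cloud libraries (`google-cloud-storage` and the Vertex AI SDK) are imported lazily so the pure helpers can be tried without credentials.

```python
from typing import Dict

# Hypothetical prompt; it includes a single example element to show the desired shape.
PROMPT_TEMPLATE = """You are a security reviewer. Analyze the Python code below for vulnerabilities.
Respond with a JSON array only. Each element must have the keys
"file", "severity", "issue", and "recommendation".

Example of one element:
{{"file": "app.py", "severity": "high", "issue": "SQL built by string concatenation", "recommendation": "Use parameterized queries"}}

Code:
{code}
"""


def load_python_files(bucket_name: str, prefix: str = "") -> Dict[str, str]:
    """Download every .py object under a prefix (needs GCP credentials)."""
    from google.cloud import storage  # lazy import: only needed for real buckets

    client = storage.Client()
    files: Dict[str, str] = {}
    for blob in client.list_blobs(bucket_name, prefix=prefix):
        if blob.name.endswith(".py"):
            files[blob.name] = blob.download_as_text()
    return files


def consolidate(files: Dict[str, str]) -> str:
    """Join files into one string, marking where each file starts."""
    parts = [
        f"# ==== FILE: {path} ====\n{source.rstrip()}\n"
        for path, source in sorted(files.items())
    ]
    return "\n".join(parts)


def build_prompt(code: str) -> str:
    """Embed the consolidated code in the prompt template."""
    return PROMPT_TEMPLATE.format(code=code)


def scan(code: str, project: str, location: str = "us-central1") -> str:
    """Send the prompt to Gemini through the Vertex AI SDK and return the raw text."""
    import vertexai
    from vertexai.generative_models import GenerativeModel

    vertexai.init(project=project, location=location)
    model = GenerativeModel("gemini-1.5-pro")  # assumed model ID
    response = model.generate_content(build_prompt(code))
    return response.text
```

Marking each file's start in the consolidated string is one way to let the model attribute a finding to a path; the notebook may handle this differently.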

By combining one-shot inference with carefully crafted prompt engineering, Gemini 1.5 Pro can analyze the code structure to identify potential vulnerabilities within the code and suggest helpful and contextual modifications. These findings, along with relevant code snippets, are then extracted from the model's response, systematically organized in a Pandas DataFrame, and finally transformed into CSV and JSON reports, ready for further analysis.
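A minimal sketch of the extraction and reporting step follows, assuming the model was asked to reply with a JSON array of findings. The helper names, the finding keys used in the usage note, and the output file names are hypothetical. `pandas` is imported lazily, so the parsing helper works without it.

```python
import json
from typing import Dict, List


def parse_findings(response_text: str) -> List[Dict[str, str]]:
    """Extract the JSON array of findings from a model reply.

    Tolerates extra text or Markdown fences around the array by taking
    the span from the first '[' to the last ']'. Returns an empty list
    when no valid array is found.
    """
    start = response_text.find("[")
    end = response_text.rfind("]")
    if start == -1 or end < start:
        return []
    try:
        findings = json.loads(response_text[start:end + 1])
    except json.JSONDecodeError:
        return []
    # Keep only object-shaped entries so the DataFrame has clean rows.
    return [item for item in findings if isinstance(item, dict)]


def write_reports(
    findings: List[Dict[str, str]],
    csv_path: str = "findings.csv",
    json_path: str = "findings.json",
) -> None:
    """Organize findings in a DataFrame and write CSV and JSON reports."""
    import pandas as pd  # lazy import: third-party dependency

    frame = pd.DataFrame(findings)
    frame.to_csv(csv_path, index=False)
    frame.to_json(json_path, orient="records", indent=2)
```

For example, `write_reports(parse_findings(reply))` would produce both files from a raw reply. Because model output is not guaranteed to be valid JSON, the parser returns an empty list on failure instead of raising.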

It’s important to note that recommended fixes are not automatically applied.

The scope of this experiment is limited to identifying issues and suggesting helpful and contextual modifications. Automating remediations or fitting the findings into a review workflow would belong in a more mature tool and hasn't been considered as part of the experiment.

You can refer to the complete notebook.