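Baoyue Reading Guide Summary

1.

Keywords: Microsoft, Research, Time Series, Model, Inference

2.

Summary: This article is part of a blog series from the Microsoft research community. It covers new research such as the MG-TSD model and the Pre-gated MoE algorithm, a discussion on exploring the frontiers of AI, and news coverage of the research.

3.

Main content:

– Research Focus: week of July 15, 2024

– New research

– MG-TSD: a multi-granularity guided diffusion model that advances time series analysis and performs well in long-term forecasting.

– Pre-gated MoE: an algorithm-system co-design that addresses the challenges of Mixture-of-Experts inference.

– A discussion of what matters in a measure for large-scale search evaluation.

– LordNet: an efficient neural network that learns to solve partial differential equations without simulated data.

– FXAM: a unified and fast interpretable model for predictive analytics.

– Ideas: Rafah Hosn explores AI frontiers.

– Microsoft Research in the news: Sriram K Rajamani discusses related research.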

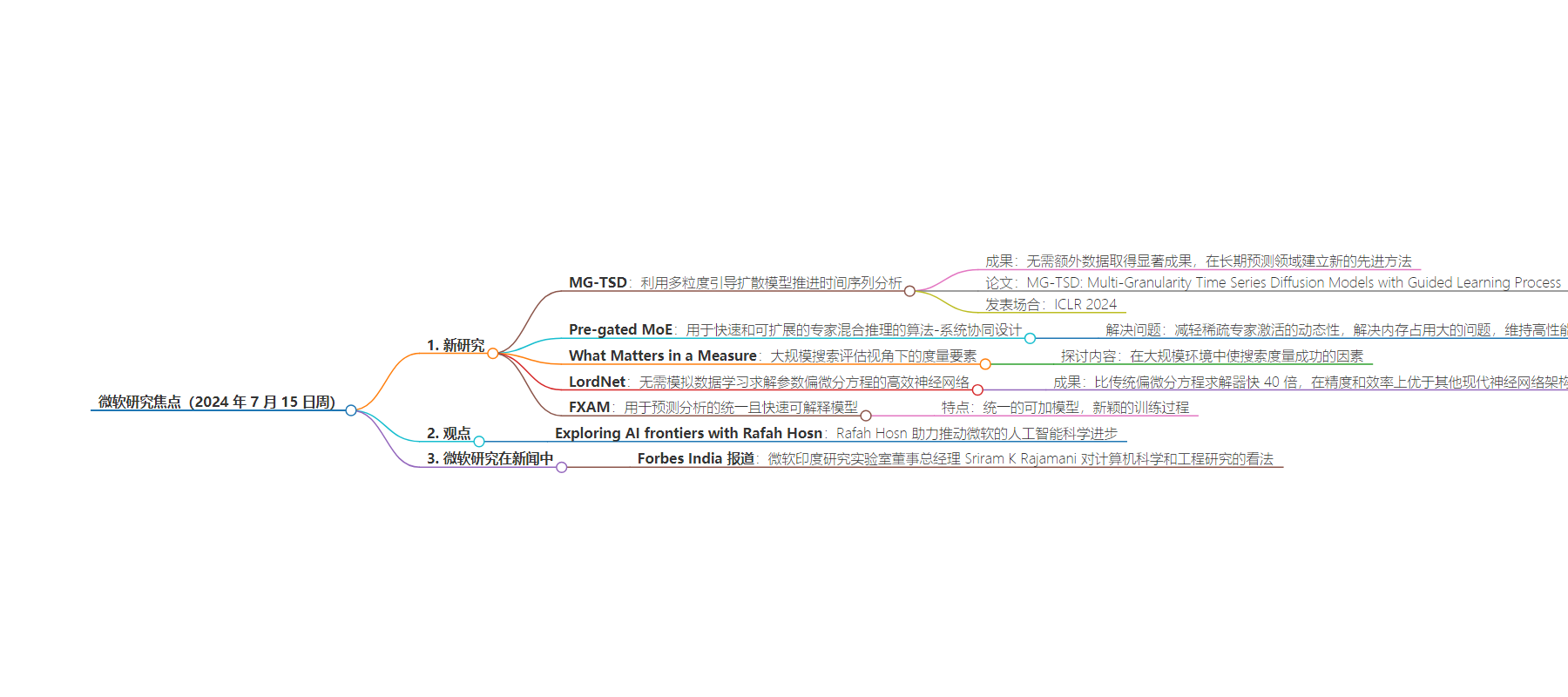

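Mind map:

Article URL: https://www.microsoft.com/en-us/research/blog/research-focus-week-of-july-15-2024/

Source: microsoft.com

Author: Alyssa Hughes

Published: 2024/7/17 16:00

Language: English

Total word count: 1,100

Estimated reading time: 5 minutes

Score: 86

Tags: AI research, diffusion models, Mixture-of-Experts models, time series analysis, partial differential equations

Original content follows

This content was reposted on a user's recommendation to share knowledge and viewpoints. If it infringes any rights, please contact us for removal. Contact email: media@ilingban.com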

Welcome to Research Focus, a series of blog posts that highlights notable publications, events, code/datasets, new hires and other milestones from across the research community at Microsoft.

NEW RESEARCH

MG-TSD: Advancing time series analysis with multi-granularity guided diffusion model

Diffusion probabilistic models have the capacity to generate high-fidelity samples for generative time series forecasting. However, they also present issues of instability due to their stochastic nature. In a recent article: MG-TSD: Advancing time series analysis with multi-granularity guided diffusion model, researchers from Microsoft present MG-TSD, a novel approach aimed at tackling this challenge.

The MG-TSD model employs multiple granularity levels within data to guide the learning process of diffusion models, yielding remarkable outcomes without the necessity of additional data. In the field of long-term forecasting, the researchers have established a new state-of-the-art methodology that demonstrates a notable relative improvement across six benchmarks, ranging from 4.7% to 35.8%.

The paper introducing this research: MG-TSD: Multi-Granularity Time Series Diffusion Models with Guided Learning Process, was presented at ICLR 2024.
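To make "multiple granularity levels within data" concrete, here is a minimal sketch of one way to derive coarser views of a series from the series itself, with no additional data. The post does not say how MG-TSD builds its granularity levels, so the window-averaging scheme and the names `coarsen` and `multi_granularity` are assumptions for illustration only, not the authors' method.

```python
def coarsen(series, window):
    """Average non-overlapping windows, then repeat each average so the
    coarse view keeps the same length as the original series."""
    out = []
    for start in range(0, len(series), window):
        chunk = series[start:start + window]
        mean = sum(chunk) / len(chunk)
        out.extend([mean] * len(chunk))
    return out


def multi_granularity(series, windows=(1, 4, 12)):
    """Map each window size to a view of the series at that granularity.
    A window of 1 returns the finest view (the data itself)."""
    return {w: coarsen(series, w) for w in windows}


# Hypothetical series with a repeating pattern of period 6.
fine = [float(i % 6) for i in range(12)]
views = multi_granularity(fine, windows=(1, 3, 6))
for window, view in views.items():
    print(window, view)
```

In a guided-diffusion setting, the coarser views could act as smoother targets for intermediate denoising steps, but how that guidance is wired into training is specified in the paper rather than in this post.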

NEW RESEARCH

Pre-gated MoE: An Algorithm-System Co-Design for Fast and Scalable Mixture-of-Expert Inference

Machine learning applications based on large language models (LLMs) have been widely deployed in consumer products. Increases in model size and training data have played an important role in this process. Since larger models can bring higher accuracy, it is likely that future models will also grow in size, which vastly increases the computational and memory requirements of LLMs.

Mixture-of-Experts (MoE) architecture, which can increase model size without proportionally increasing computational requirements, was designed to address this challenge. Unfortunately, MoE’s high memory demands and dynamic activation of sparse experts restrict its applicability to real-world problems. Previous solutions that offload MoE’s memory-hungry expert parameters to central processing unit (CPU) memory fall short.

In a recent paper: Pre-gated MoE: An Algorithm-System Co-Design for Fast and Scalable Mixture-of-Expert Inference, researchers from Microsoft address these challenges using algorithm-system co-design. Pre-gated MoE alleviates the dynamic nature of sparse expert activation, addressing the large memory footprint of MoEs while also sustaining high performance. The researchers demonstrate that pre-gated MoE improves performance, reduces graphics processing unit (GPU) memory consumption, and maintains model quality.
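The sketch below illustrates why removing the dynamic expert choice from the critical path can help with offloading. It assumes that a gate in one block picks the experts for the next block, so those experts' weights could be copied from CPU to GPU memory while the current block is still computing. The post does not describe the mechanism at this level of detail; `ToyBlock`, `forward`, and the scalar "experts" are hypothetical stand-ins, and the prefetch is only recorded in a list rather than performed.

```python
import random


def top_k(scores, k):
    """Return the indices of the k largest scores, highest first."""
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return order[:k]


def gate_scores(x, gate_weights):
    """One linear score per expert: dot product of x with that expert's row."""
    return [sum(xi * wi for xi, wi in zip(x, row)) for row in gate_weights]


class ToyBlock:
    """Hypothetical MoE block in which each expert is a scalar multiplier."""

    def __init__(self, num_experts, dim, rng):
        self.expert_scale = [rng.uniform(0.5, 1.5) for _ in range(num_experts)]
        # Assumption: these weights score the experts of the NEXT block.
        self.pre_gate = [[rng.uniform(-1, 1) for _ in range(dim)]
                         for _ in range(num_experts)]

    def run_experts(self, x, expert_ids):
        scale = sum(self.expert_scale[e] for e in expert_ids) / len(expert_ids)
        return [scale * xi for xi in x]


def forward(blocks, x, first_gate, k=2):
    # The first block has no predecessor, so it uses a conventional gate.
    selected = top_k(gate_scores(x, first_gate), k)
    prefetched = []
    for i, block in enumerate(blocks):
        next_selected = None
        if i + 1 < len(blocks):
            # Choose the next block's experts before running this block's
            # experts, so their weights could be fetched in the background.
            next_selected = top_k(gate_scores(x, block.pre_gate), k)
            prefetched.append((i + 1, next_selected))
        x = block.run_experts(x, selected)
        selected = next_selected
    return x, prefetched


rng = random.Random(0)
dim, num_experts = 4, 8
blocks = [ToyBlock(num_experts, dim, rng) for _ in range(3)]
first_gate = [[rng.uniform(-1, 1) for _ in range(dim)]
              for _ in range(num_experts)]
output, prefetched = forward(blocks, [1.0, -0.5, 0.25, 2.0], first_gate)
print(prefetched)
```

With a conventional gate, the experts of a block are known only once that block starts, so an offloaded expert must be fetched while computation waits. In this sketch the choice for block i + 1 is already known while block i runs, which is what would allow the transfer to overlap with computation.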

Ideas: Exploring AI frontiers with Rafah Hosn

Energized by disruption, partner group product manager Rafah Hosn is helping to drive scientific advancement in AI for Microsoft. She talks about the mindset needed to work at the frontiers of AI and how the research-to-product pipeline is changing in the GenAI era.


NEW RESEARCH

What Matters in a Measure? A Perspective from Large-Scale Search Evaluation

Evaluation is a crucial aspect of information retrieval (IR) and has been thoroughly studied by academic and professional researchers for decades. Much of the research literature discusses techniques to produce a single number, reflecting the system’s performance: precision or cumulative gain, for example, or dozens of alternatives. Those techniques—metrics—are themselves evaluated, commonly by reference to sensitivity and validity.

To measure search in industry settings, many other aspects must be considered: how much a metric costs, how robust it is to the happenstance of sampling, whether it is debuggable, and what is incentivized when a metric is taken as a goal. In a recent paper: What Matters in a Measure? A Perspective from Large-Scale Search Evaluation, researchers from Microsoft discuss what makes a search metric successful in large-scale settings, including factors which are not often canvassed in IR research, but which are important in “real-world” use. The researchers illustrate this discussion with examples from industrial settings and elsewhere and offer suggestions for metrics as part of a working system.
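As a small illustration of two ideas mentioned above, the sketch below computes precision and discounted cumulative gain for a ranked list, then uses a bootstrap over queries to show how much a mean score can move under resampling. The relevance labels, the log2 discount, and the function names are assumptions chosen for the example; the paper's own recommendations are not reproduced here.

```python
import math
import random


def precision_at_k(relevance, k):
    """Fraction of the top k results with a positive relevance label."""
    return sum(1 for r in relevance[:k] if r > 0) / k


def dcg_at_k(gains, k):
    """Discounted cumulative gain with the common log2(rank + 1) discount."""
    return sum(g / math.log2(rank + 2) for rank, g in enumerate(gains[:k]))


def bootstrap_interval(per_query_scores, rng, resamples=1000, alpha=0.05):
    """Percentile interval for the mean score, resampling queries with
    replacement. A wide interval suggests the metric is sensitive to
    which queries happened to be sampled."""
    n = len(per_query_scores)
    means = sorted(
        sum(rng.choice(per_query_scores) for _ in range(n)) / n
        for _ in range(resamples)
    )
    low = means[int(alpha / 2 * resamples)]
    high = means[int((1 - alpha / 2) * resamples) - 1]
    return low, high


# Hypothetical graded labels for one ranked result list.
ranked_gains = [3, 2, 0, 1]
print(precision_at_k(ranked_gains, 3), dcg_at_k(ranked_gains, 4))

# Hypothetical per-query precision scores from a small query sample.
rng = random.Random(7)
scores = [rng.choice([0.0, 0.2, 0.4, 0.6, 0.8, 1.0]) for _ in range(50)]
low, high = bootstrap_interval(scores, rng)
print(low, high)
```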

NEW RESEARCH

LordNet: An efficient neural network for learning to solve parametric partial differential equations without simulated data

Partial differential equations (PDEs) are ubiquitous in mathematically-oriented scientific fields, such as physics and engineering. The ability to solve PDEs accurately and efficiently can empower deep understanding of the physical world. However, in many complex PDE systems, traditional solvers are too time-consuming. Recently, deep learning-based methods including neural operators have been successfully used to provide faster PDE solvers through approximating or enhancing conventional ones. However, this requires a large amount of simulated data, which can be costly to collect. This can be avoided by learning physics from the physics-constrained loss, also known as mean squared residual (MSR) loss constructed by the discretized PDE.

In a recent paper: LordNet: An efficient neural network for learning to solve parametric partial differential equations without simulated data, researchers from Microsoft investigate the physical information in the MSR loss, which they call long-range entanglements. They identify the challenge: the neural network must model the long-range entanglements in the spatial domain of the PDE, whose patterns vary. To tackle the challenge, they propose LordNet, a tunable and efficient neural network for modeling various entanglements. Their tests show that LordNet can be 40× faster than traditional PDE solvers. In addition, LordNet outperforms other modern neural network architectures in accuracy and efficiency with the smallest parameter size.
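To show what a mean squared residual loss built from a discretized PDE looks like, here is a minimal sketch for the 1D Poisson problem -u'' = f on (0, 1) with zero boundary values, discretized by central differences. The choice of PDE, the grid, and the name `msr_loss` are assumptions for illustration; the network that would produce the candidate solution is not shown.

```python
def msr_loss(u, f, h):
    """Mean squared residual of -u'' = f on a uniform grid.

    u and f hold values at interior grid points; the boundary values
    are assumed to be zero. No reference solution is needed: the loss
    only checks how well u satisfies the discretized equation.
    """
    padded = [0.0] + list(u) + [0.0]
    residuals = []
    for i in range(1, len(padded) - 1):
        neg_laplacian = (-padded[i - 1] + 2 * padded[i] - padded[i + 1]) / (h * h)
        residuals.append(neg_laplacian - f[i - 1])
    return sum(r * r for r in residuals) / len(residuals)


n = 9                       # number of interior grid points
h = 1.0 / (n + 1)           # grid spacing
xs = [(i + 1) * h for i in range(n)]
f = [2.0] * n               # right-hand side of -u'' = 2

exact = [x * (1 - x) for x in xs]   # u(x) = x(1 - x) solves -u'' = 2
guess = [0.0] * n                   # a poor candidate solution

print(msr_loss(exact, f, h))
print(msr_loss(guess, f, h))
```

In a training loop, `u` would be the output of the neural network and this loss would be minimized directly, which is how the need for simulated solution data is avoided. Each residual couples a grid point with its neighbors, and driving all residuals to zero at once ties distant points together through the equation.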

NEW RESEARCH

FXAM: A unified and fast interpretable model for predictive analytics

The generalized additive model (GAM) is a standard for interpretability. However, due to the one-to-many and many-to-one phenomena which appear commonly in real-world scenarios, existing GAMs are limited in serving predictive analytics, in terms of both accuracy and training efficiency. In a recent paper: FXAM: A unified and fast interpretable model for predictive analytics, researchers from Microsoft propose FXAM (Fast and eXplainable Additive Model), a unified and fast interpretable model for predictive analytics. FXAM extends GAM’s modeling capability with a unified additive model for numerical, categorical, and temporal features. FXAM uses a novel training procedure called three-stage iteration (TSI). The three stages of TSI correspond to learning over numerical, categorical, and temporal features respectively. Each stage learns a local optimum by fixing the parameters of the other stages. The researchers design joint learning over categorical features and partial learning over temporal features to achieve high accuracy and training efficiency. They show that TSI is mathematically guaranteed to converge to the global optimum. They further propose a set of optimization techniques to speed up FXAM’s training algorithm to meet the needs of interactive analysis.
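The idea of updating one group of features while the others stay fixed can be sketched with a generic backfitting-style loop for a tiny additive model. This is not FXAM's TSI: the linear term, the per-group offsets, and the names `fit_additive` and `group_means` are assumptions that only illustrate the alternating structure on noise-free synthetic data.

```python
def group_means(keys, values):
    """Mean of values for each distinct key."""
    totals, counts = {}, {}
    for key, value in zip(keys, values):
        totals[key] = totals.get(key, 0.0) + value
        counts[key] = counts.get(key, 0) + 1
    return {key: totals[key] / counts[key] for key in totals}


def fit_additive(xs, cats, periods, ys, iterations=100):
    """Fit y ~ slope * x + cat_offset[cat] + per_offset[period] by
    alternating three least-squares stages, each with the others fixed."""
    slope = 0.0
    cat_offset = {c: 0.0 for c in set(cats)}
    per_offset = {p: 0.0 for p in set(periods)}
    n = len(ys)
    for _ in range(iterations):
        # Stage 1 (numerical): refit the slope on what the others leave.
        rest = [ys[i] - cat_offset[cats[i]] - per_offset[periods[i]]
                for i in range(n)]
        slope = sum(x * r for x, r in zip(xs, rest)) / sum(x * x for x in xs)
        # Stage 2 (categorical): refit one offset per category.
        rest = [ys[i] - slope * xs[i] - per_offset[periods[i]]
                for i in range(n)]
        cat_offset = group_means(cats, rest)
        # Stage 3 (temporal): refit one offset per period.
        rest = [ys[i] - slope * xs[i] - cat_offset[cats[i]]
                for i in range(n)]
        per_offset = group_means(periods, rest)
    return slope, cat_offset, per_offset


def mse(xs, cats, periods, ys, model):
    slope, cat_offset, per_offset = model
    errors = [ys[i] - slope * xs[i] - cat_offset[cats[i]] - per_offset[periods[i]]
              for i in range(len(ys))]
    return sum(e * e for e in errors) / len(errors)


# Hypothetical data: every (x, category, period) combination appears once.
xs = [float(i % 4) for i in range(24)]
cats = ["abc"[(i // 4) % 3] for i in range(24)]
periods = [i // 12 for i in range(24)]
true_cat = {"a": 1.0, "b": -2.0, "c": 0.5}
true_per = {0: 0.0, 1: 3.0}
ys = [2.0 * x + true_cat[c] + true_per[p]
      for x, c, p in zip(xs, cats, periods)]

model = fit_additive(xs, cats, periods, ys)
print(model[0], mse(xs, cats, periods, ys, model))
```

Because each stage solves a least-squares problem exactly and the overall objective is convex, the alternation drives the error toward its minimum on this toy data. The individual offsets are only determined up to a shared constant, so the fitted predictions, not the raw offsets, are the quantity to compare.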

Microsoft Research in the news

Forbes India | June 28, 2024

Sriram K Rajamani, managing director of Microsoft Research India Lab, reflects on computer science and engineering research, including how AI and LLMs can help solve local needs. Rajamani also discusses the technical aspects of how modern AI models work, and best practices from the research lab that could apply to India’s deep tech ecosystem.
